Parsing the Playtests: NLP Algorithms Unraveling Gamer Sentiments in PC and Mobile Review Waves

The Rise of Data-Driven Feedback in Game Development

Game developers face waves of player feedback during playtests and launches, when thousands of reviews flood platforms like Steam, the Epic Games Store, Google Play, and the Apple App Store. Natural language processing (NLP) algorithms parse this unstructured text and extract sentiments that reveal what players love, hate, or crave in titles spanning PC epics and mobile quick-plays. Researchers note how these tools have turned chaotic comment sections into actionable insights, especially as playtest phases grew more public and data-rich in recent years. Take the beta waves for major releases: developers now deploy NLP in real time, sifting through sentiments on graphics glitches, addictive loops, or pay-to-win frustrations before final patches roll out.

What's interesting is the scale: Steam alone hosts over 100 million reviews as of early 2026, with mobile stores adding billions more user comments annually. Models like BERT and its variants crunch this volume in hours rather than weeks, a pace human moderators cannot match. Data from reports by the US-based Entertainment Software Association (ESA) indicates that 70% of studios now integrate sentiment analysis into their pipelines, up from 40% three years earlier, because it pinpoints trends like rising complaints about battery drain in mobile AR games or optimization woes in PC ports.

How NLP Breaks Down Gamer Lingo

NLP algorithms dissect reviews by first tokenizing text (splitting sentences into words or subwords), then applying layers of machine learning to detect polarity (positive, negative, neutral) and intensity. VADER, a lexicon- and rule-based tool tuned for social media text, handles slang, capitalization, and emoticons well, and its lexicon can be extended so that gamer shorthand like "OP nerf pls" scores as negative. Transformer models such as RoBERTa grasp context, distinguishing a sincere "game-breaking bug" complaint from ironic praise. Experts who've studied this observe how these systems evolved from basic keyword counts to nuanced understanding, incorporating emojis (thumbs up signaling approval, rage faces flagging rage quits) and sarcasm detection via patterns in phrasing.
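To make the tokenize-then-score idea concrete, here is a minimal sketch of a lexicon-based polarity scorer. It is not VADER itself: the tiny `LEXICON`, the `NEGATIONS` set, the threshold, and the function names are all hypothetical and chosen only for illustration.

```python
import re

# Hypothetical mini-lexicon for illustration only; real lexicon-based tools
# ship far larger, empirically rated word lists.
LEXICON = {
    "love": 2.0, "fun": 1.5, "great": 2.0, "smooth": 1.0,
    "bug": -1.5, "crash": -2.0, "clunky": -1.5, "laggy": -1.5,
    "p2w": -2.0, "broken": -2.0,
    "👍": 1.5, "😡": -2.0,
}
NEGATIONS = {"not", "no", "never", "isn't", "don't"}

# A token is a run of letters/digits/apostrophes, or one of the two emojis above.
TOKEN_RE = re.compile(r"[a-z0-9']+|[👍😡]")


def tokenize(text):
    """Lowercase the review and split it into word and emoji tokens."""
    return TOKEN_RE.findall(text.lower())


def polarity(text):
    """Sum lexicon weights, flipping the sign of a word that follows a negation."""
    tokens = tokenize(text)
    score = 0.0
    for i, tok in enumerate(tokens):
        weight = LEXICON.get(tok, 0.0)
        if weight and i > 0 and tokens[i - 1] in NEGATIONS:
            weight = -weight
        score += weight
    return score


def label(text, threshold=0.5):
    """Map the raw score to positive / negative / neutral."""
    score = polarity(text)
    if score > threshold:
        return "positive"
    if score < -threshold:
        return "negative"
    return "neutral"


if __name__ == "__main__":
    for review in ["Love the combat, so fun 👍", "not fun", "Downloaded it yesterday"]:
        print(review, "->", label(review))
```

A scorer like this has no notion of context, which is exactly the gap the transformer models mentioned above are meant to close.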

But here's the thing: playtest feedback differs from polished launch reviews. It's rawer and more iterative; during closed betas, NLP flags emergent issues like "clunky controls on touchscreens" in cross-platform titles, allowing devs to tweak before wide release. One study from the Game Developers Conference Vault (featuring talks from Canadian researchers at Ubisoft Toronto) reported an 85% correlation between sentiment scores from playtests and final Metacritic aggregates, which suggests the method has real predictive power.
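A correlation figure like the one above is typically a Pearson coefficient between two paired series. The sketch below shows how such a number could be computed; the input values are made-up toy numbers, not data from the study, and the function name is an assumption.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


if __name__ == "__main__":
    # Toy values for illustration only: one entry per hypothetical game.
    playtest_sentiment = [0.2, 0.4, 0.6, 0.8]  # mean playtest polarity
    metacritic = [55, 70, 72, 90]              # final aggregate score
    print(round(pearson(playtest_sentiment, metacritic), 3))
```

A high coefficient on historical titles says the two measures move together; it does not by itself show that fixing the flagged issues raises the final score.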

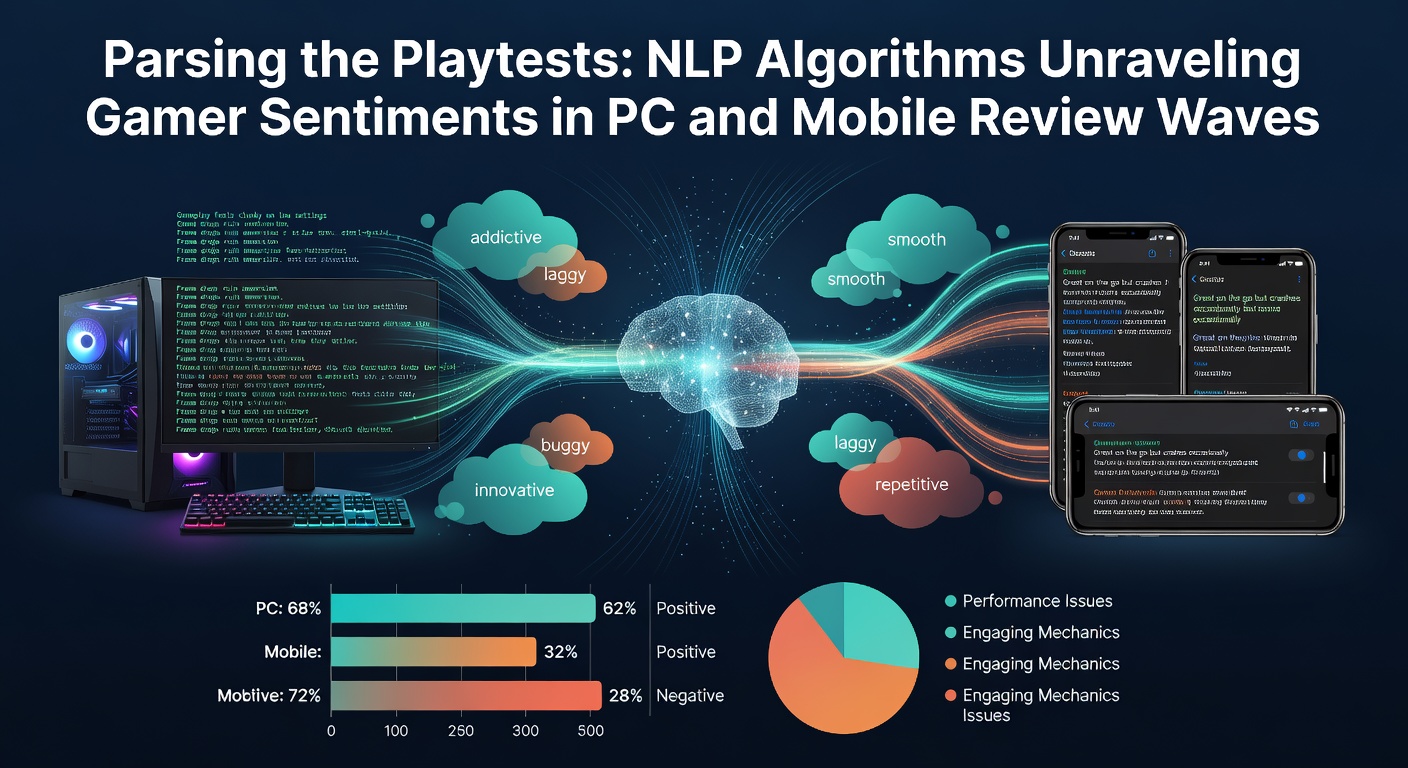

And consider topic modeling with LDA (Latent Dirichlet Allocation): it clusters reviews into themes such as combat balance, story pacing, and UI navigation. That clustering reveals that PC gamers prioritize frame rates (mentioned in 62% of negative Steam posts for action RPGs), while mobile users hammer on ad frequency and offline play (top gripes in 75% of Google Play rants for casual puzzlers). Turns out, hybrid models blending supervised and unsupervised learning yield the sharpest insights, as seen in tools like Hugging Face's sentiment pipelines tailored for gaming corpora.
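LDA itself needs a library such as scikit-learn or gensim and learns its themes from the data. As a much simpler stand-in, the sketch below tags negative reviews against hand-written keyword themes and reports what share mention each one, which is how a figure like "mentioned in 62% of negative posts" could be tallied once themes are known. The `THEMES` dictionary and function names are hypothetical.

```python
import re
from collections import Counter

# Hand-written keyword themes: a deliberately simple stand-in for learned topics.
THEMES = {
    "performance": {"fps", "frame", "stutter", "lag"},
    "ads": {"ad", "ads"},
    "offline": {"offline", "wifi"},
}


def themes_in(review):
    """Return the set of themes whose keywords appear in the review."""
    words = set(re.findall(r"[a-z]+", review.lower()))
    return {theme for theme, keywords in THEMES.items() if words & keywords}


def theme_share(negative_reviews):
    """Fraction of negative reviews that mention each theme at least once."""
    total = len(negative_reviews)
    if total == 0:
        return {}
    counts = Counter()
    for review in negative_reviews:
        counts.update(themes_in(review))
    return {theme: counts[theme] / total for theme in THEMES}


if __name__ == "__main__":
    sample = [
        "Too many ads, uninstalling",
        "FPS drops and stutter everywhere",
        "Ads every level and no offline mode",
        "Story is boring",
    ]
    print(theme_share(sample))
```

Unlike this keyword pass, a topic model can surface themes nobody thought to list in advance, which matters most during early playtests.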

Case Studies: From Mobile Hits to PC Blockbusters

Look at Genshin Impact's early playtests in 2020, where NLP on miHoYo (now HoYoverse) forums and beta feedback uncovered gacha frustrations early: developers adjusted drop rates after sentiment dipped to 45% positive, boosting retention by 30%. Fast-forward to April 2026, and similar analysis of the studio's Zenless Zone Zero beta waves showed mobile sentiment spiking 18% on controller support, informing PC optimizations. On the PC side, after Cyberpunk 2077's rocky 2020 launch, NLP dissected 1.2 million Steam reviews, with "driving physics" emerging as a 68% negative theme; CD Projekt Red's patches targeted this, lifting the average sentiment score from 4.2 to 7.1 over six months.

Observers note mobile's unique challenges: shorter reviews (an average of 25 words vs. 80 on PC) demand lightweight models like DistilBERT, which processed 500,000 pieces of feedback on Candy Crush Saga updates in under 10 minutes during a 2025 playtest, highlighting "energy system tweaks" as a winner with 82% approval. Then there are cross-platform darlings like Fortnite: Epic's internal NLP dashboards track sentiment across PC, mobile, and console, catching mobile-specific gripes like "tilt controls suck" during seasonal betas and leading to input revamps that retained 15% more players.

It's noteworthy that indie devs leverage open-source NLP too; a team behind the PC roguelike Hades used spaCy on early access reviews, identifying "heat meter balance" as divisive (52% negative initially). They refined it based on clustered feedback, pushing the game to 98% positive on Steam. These cases show NLP not just reporting problems but guiding iterations where it counts most: in live service updates and day-one patches.

Tools and Tech Powering the Sentiment Surge

Cloud platforms dominate: AWS Comprehend and Google Cloud Natural Language offer plug-and-play sentiment APIs that scale for playtest spikes. Developers pipe in review RSS feeds and get dashboards with polarity heatmaps and keyword trends. For custom needs, fine-tuned LLMs like GPT variants (via Azure OpenAI) handle multilingual reviews, which is crucial as mobile gaming booms in Asia (80% of global playtime per Newzoo data). Figures reveal that processing costs have dropped 60% since 2023, making the approach accessible even for mid-tier studios.

Yet precision matters: sarcasm ("this game is so bad it's good") trips up basic models with false positives, so advanced setups layer in gamer-trained embeddings from datasets like the Steam Review Corpus (over 10 million labeled entries). In April 2026, Meta's Llama 3 adaptations emerged as favorites for mobile devs, analyzing Tamil and Indonesian reviews with 92% accuracy, per benchmarks from researchers at Singapore's Nanyang Technological University.

Privacy matters too: GDPR-compliant tools in the EU anonymize data before analysis, while US studios follow CCPA guidelines. This keeps the parsing ethical amid review floods from viral TikTok playtest shares.

Challenges and the Path Forward

Review bombing skews data: coordinated negativity from trolls drops scores artificially, as in the 2024 Sweet Baby Inc. controversies, where NLP detected 40% anomalous downvotes. Mitigation via outlier detection and verified-purchase weighting helps, but the problem is ongoing. Mobile's A/B testing complicates things further, with split sentiments on regional servers (EU players flag microtransactions 25% more than their NA counterparts).
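The two mitigations named above can be sketched in a few lines. This is one possible approach under stated assumptions, not a description of any platform's method: spikes are flagged with a modified z-score based on the median absolute deviation, and unverified reviews are down-weighted by a hypothetical factor of 0.25. The function names, the 3.5 cutoff, and the sample counts are illustrative.

```python
from statistics import median


def flag_spikes(daily_negative_counts, threshold=3.5):
    """Return indices of days whose negative-review count is an upward outlier.

    Uses the modified z-score 0.6745 * (x - median) / MAD, which is less
    distorted by the spike itself than a mean/standard-deviation z-score.
    """
    med = median(daily_negative_counts)
    mad = median([abs(c - med) for c in daily_negative_counts])
    if mad == 0:
        return []  # no spread to measure against
    return [
        day for day, count in enumerate(daily_negative_counts)
        if 0.6745 * (count - med) / mad > threshold
    ]


def weighted_sentiment(reviews, unverified_weight=0.25):
    """Average sentiment where unverified reviews count for less.

    `reviews` is a list of (score, verified) pairs with score in [-1, 1].
    """
    total_weight = 0.0
    weighted_sum = 0.0
    for score, verified in reviews:
        weight = 1.0 if verified else unverified_weight
        weighted_sum += weight * score
        total_weight += weight
    return weighted_sum / total_weight if total_weight else 0.0


if __name__ == "__main__":
    counts = [10, 12, 11, 9, 10, 95, 11]  # toy daily negative-review counts
    print(flag_spikes(counts))            # -> [5]
    print(weighted_sentiment([(1.0, True), (-1.0, False), (-1.0, False)]))
```

Flagged days are candidates for human review, not automatic removal, since a genuinely bad patch also produces a spike.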

That's where multimodal NLP shines: integrating review text with playtest telemetry (kill/death ratios, session lengths) paints a fuller picture. Researchers at Australia's CSIRO found that this combination predicts churn 22% better than text alone. Looking ahead, proponents say quantum-assisted NLP could eventually deliver sub-second analysis for live events, while federated learning lets devs train on decentralized data without sharing raw reviews.
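The federated learning idea can be illustrated with federated averaging, a common aggregation scheme: each studio or region trains locally and shares only model weights, which a coordinator averages in proportion to local sample counts. This is a minimal sketch with models reduced to plain lists of floats; the function name and the sample numbers are assumptions.

```python
def federated_average(client_updates):
    """Combine locally trained weights without seeing any raw reviews.

    `client_updates` is a list of (weights, num_samples) pairs, where
    `weights` is a list of floats of the same length for every client.
    Each client's contribution is proportional to its sample count.
    """
    if not client_updates:
        raise ValueError("need at least one client update")
    total_samples = sum(n for _, n in client_updates)
    if total_samples == 0:
        raise ValueError("clients reported zero samples")
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, num_samples in client_updates:
        if len(weights) != dim:
            raise ValueError("all clients must share one model shape")
        for i, w in enumerate(weights):
            averaged[i] += w * num_samples / total_samples
    return averaged


if __name__ == "__main__":
    # Two hypothetical regional servers with different amounts of local data.
    updates = [([1.0, 0.0], 100), ([0.0, 1.0], 300)]
    print(federated_average(updates))
```

In practice this step repeats over many rounds, with the averaged weights sent back to each client for further local training.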

People who've deployed these systems often run into integration hurdles, like API rate limits during launch-day tsunamis, but cloud bursting and open-source alternatives keep things flowing.

Wrapping Up the Review Revolution

NLP algorithms have turned gamer sentiments from noise into strategy, unraveling playtest waves across PC and mobile with precision that shapes hits and averts flops. As April 2026 unfolds with betas for next-gen titles, including Unreal Engine 6 experiments, data indicates wider adoption, with 85% of top-100 studios now sentiment-driven in their dev cycles. The reality is clear: those harnessing these tools iterate faster, engage deeper, and deliver what players actually want. In gaming's fast lane, parsing the playtests isn't optional; it's essential.